How Sensemaker turns messy speech into durable structure and usable drafts.

Sensemaker is built so you can record a stream of consciousness and still get usable results: people, projects, places, actions, due dates, worlds, relationship links, durable snippets, and outputs you can use immediately.

Capture on glasses (G2 + ring-first navigation)

Sensemaker is built to minimize friction at the moment of capture. Record quickly, speak naturally, and let the processing layer do the organizing.

Stream of consciousness is OK

You don't have to organize your thoughts while speaking. The AI processing layer extracts structure after the fact.

One topic or many

You can stay focused or ramble. Sensemaker can still extract tasks, requested outputs, things to remember, and other useful signals without requiring you to clean up the capture first.

Minimal on-glasses UI

The glasses UI is intentionally lightweight; Portal and the companion app are the places for review, cleanup, drafting, and follow-through.

AI processing layer

Sensemaker's value comes from turning speech into structured memory and usable drafts. Hosted workspaces include 30 trial credits. After that, add your own OpenAI API key in Settings to continue, or use a self-hosted/local-ingest setup if you prefer to run the stack yourself.

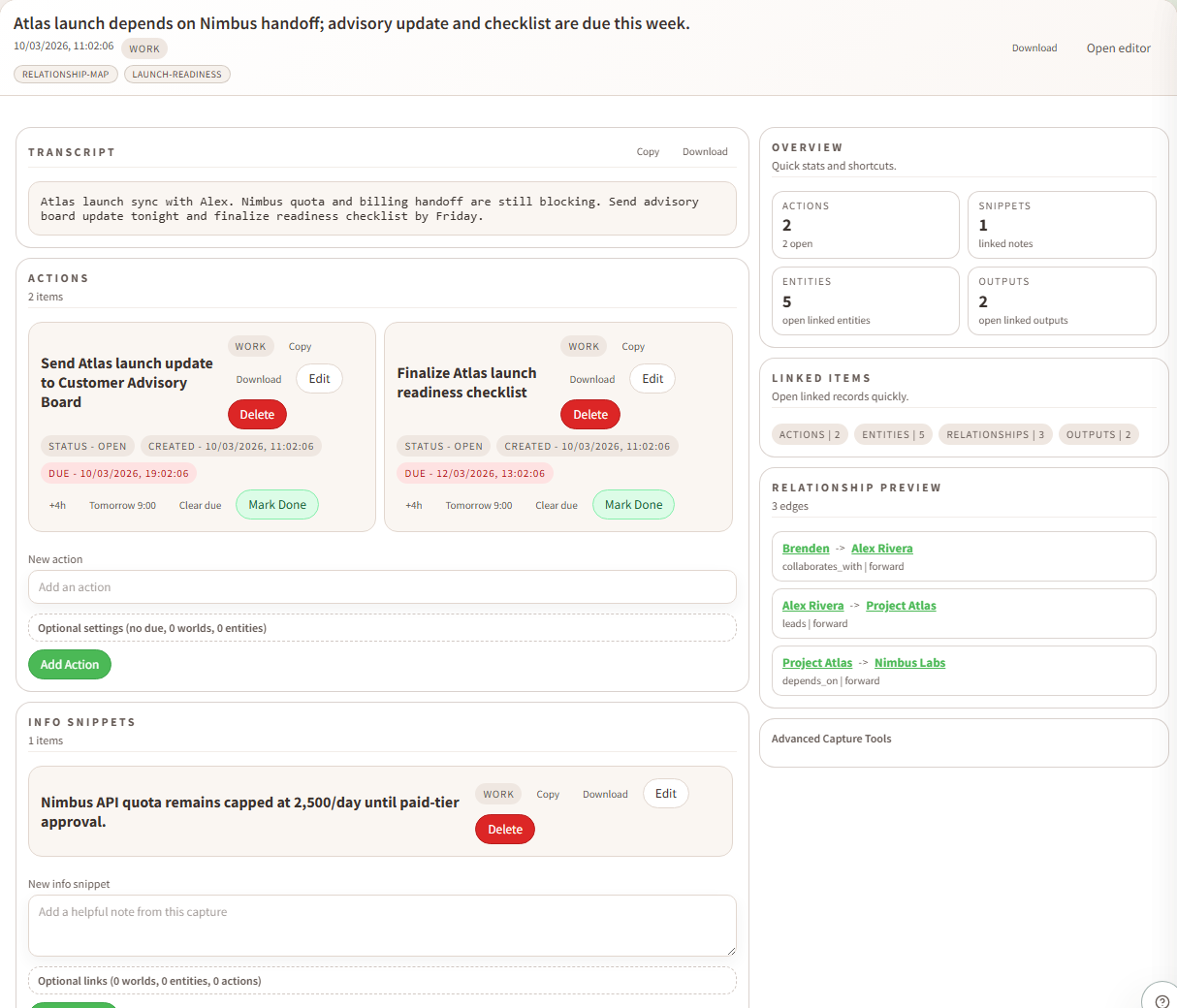

What gets extracted from a capture

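From a single recording, Sensemaker can produce entities (people, projects, places), actions with due dates, world assignments, relationship links, durable snippets, and draft outputs.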

Context-rich interpretation

Sensemaker reuses what it already knows about your world (entities, relationships, worlds, and accepted context) so new captures are matched more consistently over time. As you review and correct suggestions, linking and drafting quality continue to improve.

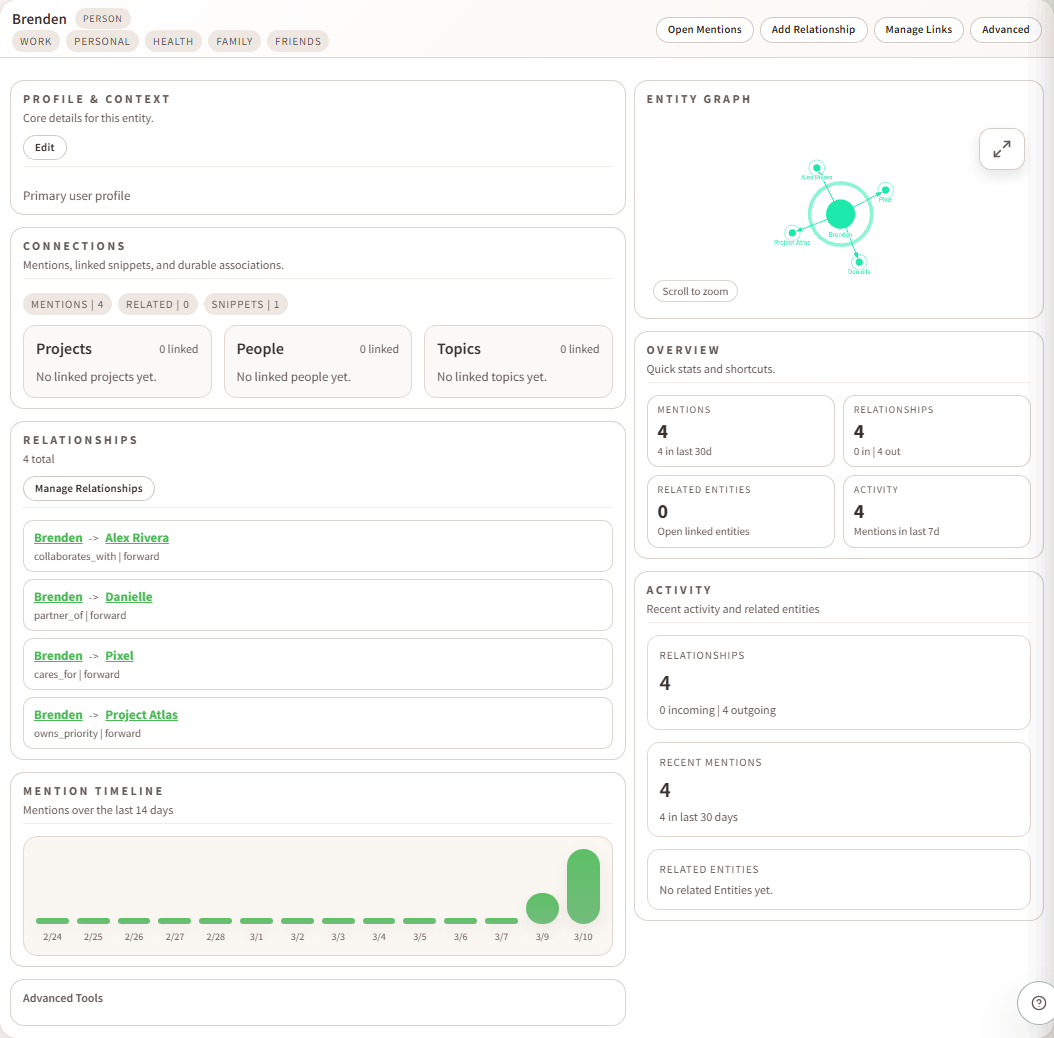

Entities + relationships (a personal knowledge graph)

Sensemaker keeps a durable, queryable graph of people, projects, topics, places, and tasks. Relationships connect entities with semantic meaning, confidence signals, and evidence from captures/snippets/actions.

Entities

People, orgs, projects, topics, places, and tasks referenced across captures.

Relationships

Edges between entities with semantics like category, strength, polarity, and lifecycle status.

Merge + dedupe

Keep one canonical entity over time while preserving aliases and evidence trails.
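As a rough illustration of the ideas above, the graph can be pictured as entity records plus relationship edges, with a merge step that folds a duplicate into a canonical entity. This is only a sketch: the class names, field names, and the `merge_entities` helper are hypothetical and are not Sensemaker's actual schema or API.

```python
from __future__ import annotations
from dataclasses import dataclass, field


@dataclass
class Entity:
    # Hypothetical shape; field names are assumptions for illustration.
    id: str
    kind: str  # e.g. "person", "project", "place"
    name: str
    aliases: set[str] = field(default_factory=set)
    evidence: list[str] = field(default_factory=list)  # capture/snippet ids


@dataclass
class Relationship:
    # Mirrors the semantics named in the text: category, strength,
    # polarity, and lifecycle status.
    source: str
    target: str
    category: str
    strength: float
    polarity: str
    status: str
    evidence: list[str] = field(default_factory=list)


def merge_entities(canonical: Entity, duplicate: Entity,
                   relationships: list[Relationship]) -> Entity:
    """Fold `duplicate` into `canonical`, keeping aliases and evidence."""
    # The duplicate's name and aliases become aliases of the canonical entity.
    canonical.aliases |= {duplicate.name} | duplicate.aliases
    canonical.aliases.discard(canonical.name)
    # Preserve the evidence trail without repeating ids.
    for ev in duplicate.evidence:
        if ev not in canonical.evidence:
            canonical.evidence.append(ev)
    # Re-point edges that referenced the duplicate.
    for rel in relationships:
        if rel.source == duplicate.id:
            rel.source = canonical.id
        if rel.target == duplicate.id:
            rel.target = canonical.id
    return canonical


sam = Entity("e1", "person", "Samantha Lee", evidence=["cap-1"])
dup = Entity("e2", "person", "Sam", aliases={"S. Lee"}, evidence=["cap-2"])
rels = [Relationship("e2", "e3", "colleague", 0.7, "positive", "active", ["cap-2"])]
merge_entities(sam, dup, rels)
```

After the merge, `sam` carries both names' history and the edge that pointed at the duplicate now points at the canonical entity.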

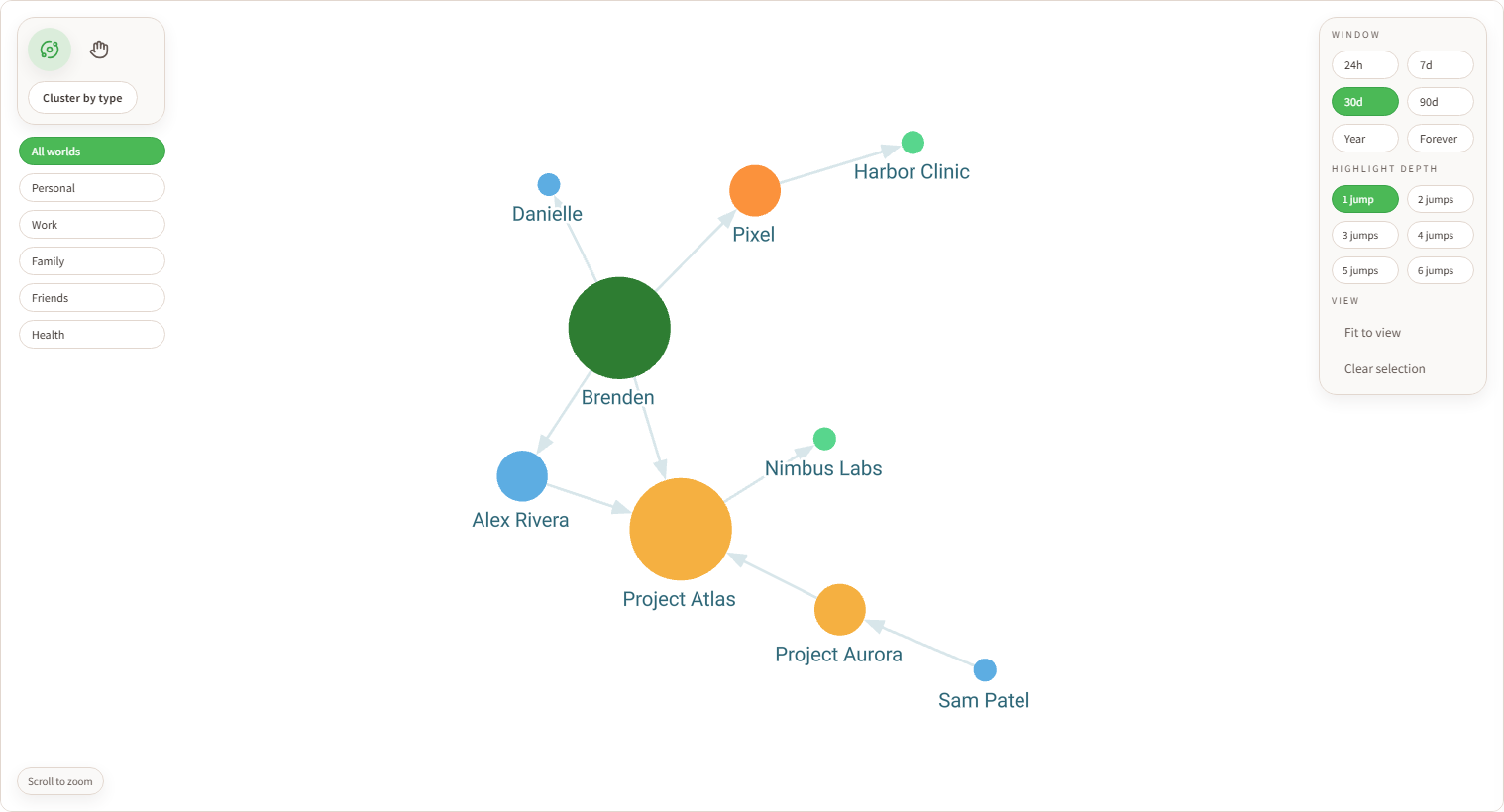

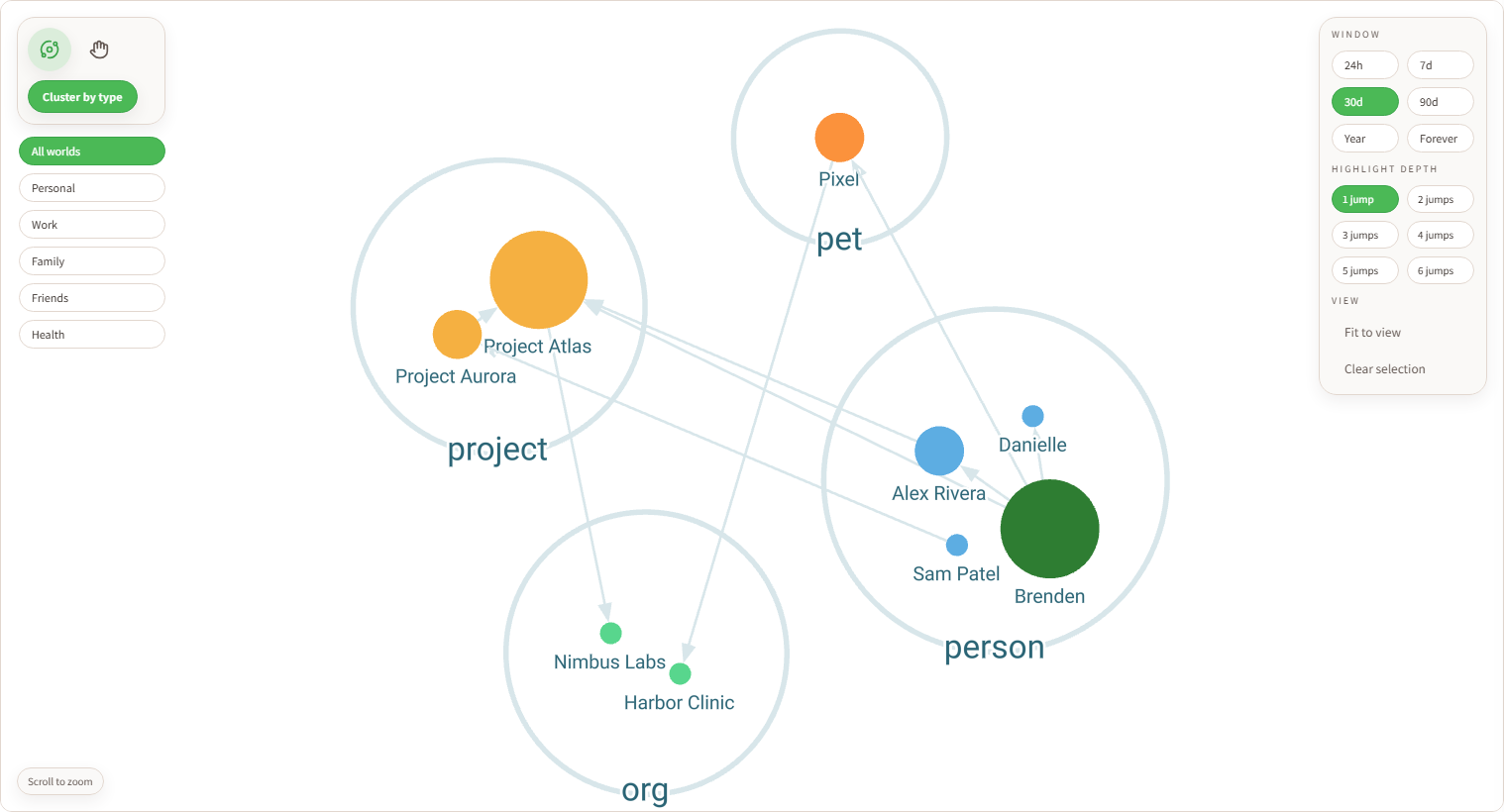

Data Viz (interactive relationship exploration)

Data Viz lets you explore your relationship graph directly: filter by world, change time windows, cluster by entity type, and adjust highlight depth to inspect local neighborhoods quickly.

World + window filters

Focus the graph by context lens and recency window to reduce noise.

Cluster + depth controls

Group nodes by entity type and set how many hops from a selected node are highlighted, so you can scan the graph's structure faster.

Node drill-in

Select nodes to inspect related entities and jump directly to full entity detail.
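One way to think about the highlight depth control is as a breadth-first search that stops after a fixed number of hops from the selected node. The sketch below assumes an undirected graph given as a list of edge pairs; the function name and the sample data are hypothetical, and Data Viz may compute neighborhoods differently.

```python
from collections import deque


def neighborhood(edges, start, depth):
    """Return {node: hops} for nodes within `depth` hops of `start`."""
    # Build an undirected adjacency map from the edge pairs.
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)

    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if hops[node] == depth:
            continue  # do not expand past the requested depth
        for nxt in adjacency.get(node, ()):
            if nxt not in hops:
                hops[nxt] = hops[node] + 1
                queue.append(nxt)
    return hops


edges = [
    ("Ana", "Project X"),
    ("Project X", "Berlin"),
    ("Berlin", "Conference"),
    ("Ana", "Bo"),
]
one_hop = neighborhood(edges, "Ana", 1)
two_hops = neighborhood(edges, "Ana", 2)
```

With depth 1 only Ana's direct neighbors are included; raising the depth to 2 also pulls in Berlin, which is reachable through Project X.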

Portal + Even Hub companion + Composer

The core Sensemaker experience is Portal for full review and drafting, plus the Even Hub companion app for G2 onboarding, capture, quick triage, and mobile follow-through.

Portal web console

Use Portal for richer review, cleanup, world management, settings, Data Viz, and deeper drafting workflows.

Even Hub companion

Use the companion app with the same account for G2 onboarding, capture review, actions, outputs, and quick cleanup on your phone.

Composer

Compose from captures, info snippets, actions, outputs, and entities. Start from a source, refine the brief, and turn memory into a draft you can actually use.

Typical flow

portal.sensemaker-app.com.

Outputs (Sensemaker "completes" the next step)

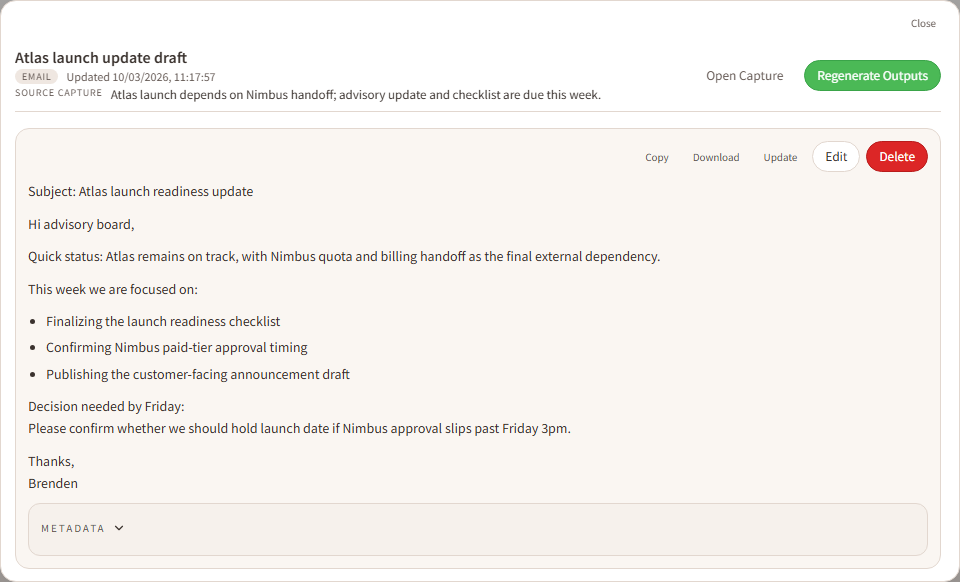

Outputs are draft artifacts generated from captures: emails, messages, docs, and custom types. They help move you from “I said I would do it” to “here’s the draft.”

Output types

System types (Email, Message, Doc) plus custom types with format hints (tone/structure).

Access anytime

Outputs live in a library and can be reviewed independently from captures.

Better with context

Foundational Context and Guidance improve drafting quality and consistency.

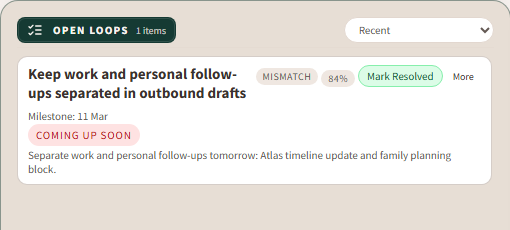

Open Loops (high-signal follow-ups)

Open Loops are actionable follow-up threads extracted from captures: questions, decisions, mismatches, and explicit gaps. They’re designed to be high signal, not generic “missing detail.”

Questions

Unanswered questions that drive a real-world next step.

Decisions

Places where you need to choose a path forward.

Mismatches

Contradictions or inconsistencies that should be resolved.

Worlds + Context + Guidance

Worlds are context lenses like Work, Family, or Friends for filtering and organization. Context and Guidance influence how captures are interpreted and how outputs are drafted.

Worlds

A capture can belong to multiple worlds. Worlds can also apply to derived items for better filtering.
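Because a capture can belong to more than one world, a world filter behaves like a set-membership check rather than a single-category lookup. The sketch below is illustrative only; the `Capture` class and `in_world` helper are hypothetical names, not part of Sensemaker.

```python
from dataclasses import dataclass, field


@dataclass
class Capture:
    # Hypothetical record; a capture holds a set of world names.
    id: str
    text: str
    worlds: set = field(default_factory=set)


def in_world(captures, world):
    """Return the captures tagged with the given world."""
    return [c for c in captures if world in c.worlds]


captures = [
    Capture("c1", "Book flights for the offsite", {"Work"}),
    Capture("c2", "Ask Dana about the school fundraiser", {"Family", "Friends"}),
    Capture("c3", "Dinner with the team and partners", {"Work", "Friends"}),
]
work_ids = [c.id for c in in_world(captures, "Work")]
friends_ids = [c.id for c in in_world(captures, "Friends")]
```

Capture `c3` appears under both the Work and Friends filters, which is the behavior a multi-world model implies.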

Foundational Context

Durable background (name, time zone, acronyms, frequent people/topics) to improve interpretation.

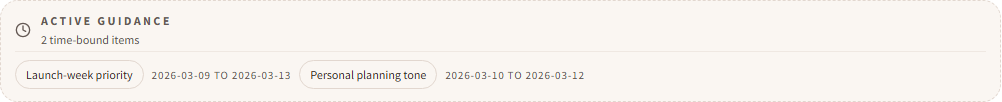

Guidance + Active Guidance

Short instruction-like context. Time-bound entries become Active Guidance while they're active.
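The time-bound behavior can be sketched as a window check: an entry with a start or end time counts as active only while the current time falls inside that window. The field names (`starts_at`, `ends_at`) and the half-open window convention are assumptions made for this example, not documented Sensemaker behavior.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Guidance:
    # Hypothetical shape for a guidance entry.
    text: str
    starts_at: Optional[datetime] = None
    ends_at: Optional[datetime] = None

    def is_time_bound(self) -> bool:
        return self.starts_at is not None or self.ends_at is not None


def active_guidance(entries, now):
    """Return time-bound entries whose window contains `now`."""
    active = []
    for g in entries:
        if not g.is_time_bound():
            continue  # always-on guidance is not "Active Guidance" here
        if g.starts_at is not None and now < g.starts_at:
            continue  # window has not opened yet
        if g.ends_at is not None and now >= g.ends_at:
            continue  # window has closed (end is exclusive in this sketch)
        active.append(g)
    return active


utc = timezone.utc
entries = [
    Guidance("Keep emails short"),
    Guidance("I'm traveling; default to async replies",
             starts_at=datetime(2025, 3, 1, tzinfo=utc),
             ends_at=datetime(2025, 3, 8, tzinfo=utc)),
]
during = active_guidance(entries, datetime(2025, 3, 4, tzinfo=utc))
after = active_guidance(entries, datetime(2025, 3, 9, tzinfo=utc))
```

The untimed entry still applies as ordinary Guidance; only the travel entry moves in and out of the active set as time passes.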

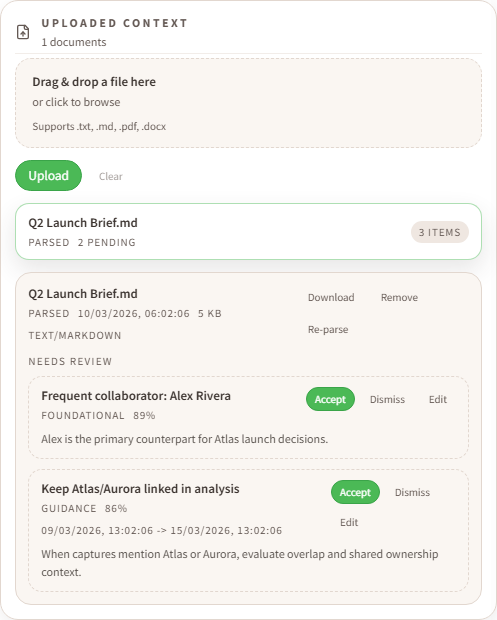

Context uploads + review

Upload docs like txt, md, pdf, or docx files. Sensemaker extracts key details into context so later captures and drafts can be more accurate and relevant.

Upload flow (beta)

Advanced integration: MCP

MCP integrations

What it is

MCP is an optional integration surface for clients that can connect directly to a Sensemaker workspace. It is a good fit when you want your own AI tooling to read captures, outputs, entities, relationships, tags, and workspace summaries.

Integration paths

- Hosted MCP: managed access for hosted workspaces, with read-only access in this phase.

- Claude Desktop: use the Sensemaker MCP desktop extension for a local Claude Desktop connection.

- OpenClaw and similar clients: connect with the hosted endpoint and a bearer token from Settings.

- Self-host MCP: advanced local path for people running the stack themselves.

Good to know

The primary product path is Portal plus the Even Hub companion app. You do not need MCP for normal use.

Hosted MCP is read-only in this phase and is designed for managed access without local Docker or local ingest.

If you want localhost endpoints and full self-managed control, use the self-host beta stack:

Web Console: http://localhost:5173/

G2 Harness: http://localhost:5173/g2/

API: http://localhost:8788/

Ingest (local): http://localhost:8787/

MCP: http://localhost:8790/mcp

Setup guides: MCP + OpenClaw and MCP + Claude Desktop.
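For readers wiring up their own client, the sketch below builds (but does not send) an MCP `initialize` request against the local MCP URL listed above. It assumes the endpoint speaks MCP's JSON-RPC over HTTP; the protocol version string, the client name, the example token, and whether the local endpoint needs a bearer token at all are assumptions. Follow the setup guides for the supported configuration.

```python
import json
import urllib.request

# Taken from the self-host endpoint list above.
LOCAL_MCP_URL = "http://localhost:8790/mcp"


def build_initialize_request(url, token=None):
    """Build a JSON-RPC `initialize` request for an MCP endpoint."""
    payload = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-03-26",  # assumed; use what your client supports
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }
    headers = {
        "Content-Type": "application/json",
        "Accept": "application/json, text/event-stream",
    }
    if token:
        # Hosted access uses a bearer token from Settings.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


request = build_initialize_request(LOCAL_MCP_URL, token="example-token")
# To actually send it: urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes it easy to inspect the headers and body before pointing the client at a real workspace.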

Hosted first, self-host optional

The main product lane is hosted: create your account in Portal, use the same account in the Even Hub companion app, and launch Sensemaker on your G2 glasses. Hosted workspaces include 30 trial credits. Self-host remains available as an advanced path if you explicitly want Docker, local ingest, or local MCP.

Hosted first steps

1. Open portal.sensemaker-app.com

2. Create your account or sign in

3. Install or open Even Hub on your phone

4. Sign in to Sensemaker in Even Hub with the same account

5. Launch Sensemaker on your G2 glasses

6. Complete onboarding and start capturing

Advanced path

If you specifically want local Docker, local ingest, or MCP on localhost, keep using the self-host beta docs. Those paths still exist, but they are no longer the default product story.